GPJATK Dataset – Multi-View Video and Motion Capture Dataset

The GPJATK dataset was designed for research on vision-based 3D gait recognition. It can also be used to evaluate multi-view gait recognition algorithms (where gallery gaits from multiple views are combined to recognize a probe gait from a single view) and cross-view gait recognition algorithms (where the probe gait and the gallery gait are recorded from two different views). Beyond gait recognition, the dataset can also be used for research on human motion tracking and articulated pose estimation.
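The two evaluation settings described above can be sketched as gallery/probe splits over camera views. This is only an illustration: the labels `C1` to `C4` are assumed (the description states four video cameras and names only C2 and C4), the function names are hypothetical, and the choice to exclude the probe view from the multi-view gallery is one possible reading of the protocol, not something the dataset prescribes.

```python
from itertools import permutations

# Assumed camera labels: four video cameras are stated, only C2 and C4 are named.
CAMERAS = ["C1", "C2", "C3", "C4"]


def cross_view_pairs(cameras):
    """Return (gallery_view, probe_view) pairs recorded from two different views."""
    return list(permutations(cameras, 2))


def multi_view_splits(cameras):
    """Return (gallery_views, probe_view) splits.

    Assumption: the gallery combines every view except the probe view.
    """
    return [
        (tuple(c for c in cameras if c != probe), probe)
        for probe in cameras
    ]


if __name__ == "__main__":
    for gallery, probe in multi_view_splits(CAMERAS):
        print(f"multi-view: gallery={gallery} probe={probe}")
    print(f"cross-view pairs: {len(cross_view_pairs(CAMERAS))}")
```

With four views, the cross-view setting gives 12 ordered gallery/probe pairs, and the multi-view setting gives four splits, one per probe view.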

Data description

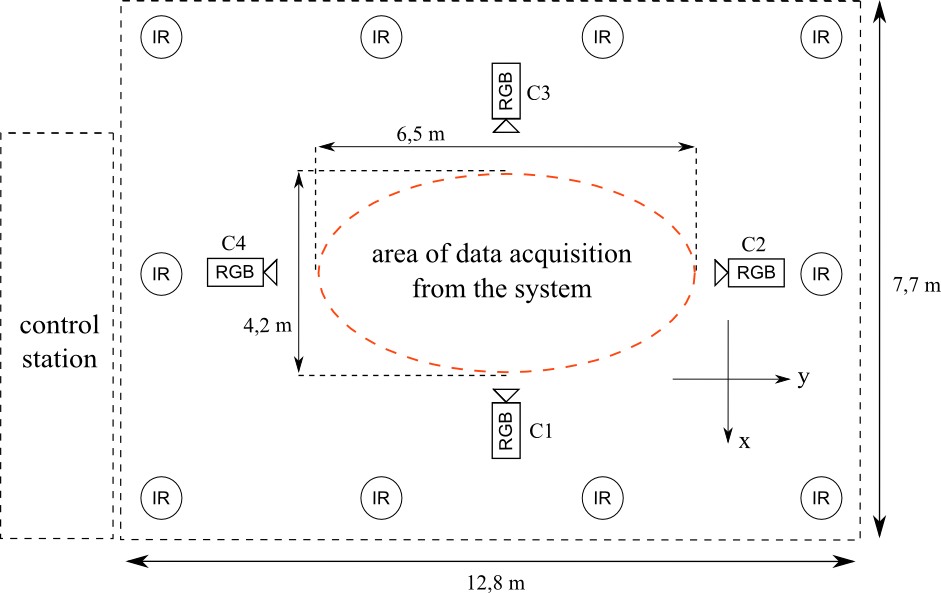

The GPJATK dataset contains data captured by 10 mocap cameras and four calibrated and synchronized video cameras. The 3D gait dataset consists of 166 data sequences that present the gait of 32 people (10 women and 22 men). In 128 data sequences, each individual wore his or her own clothes; in 24 data sequences, 6 of the performers (persons #26-#31) changed clothes; and in 14 data sequences, 7 of the performers carried a backpack. Each sequence consists of four RGB videos with a resolution of 960×540, recorded at 25 frames per second by the synchronized and calibrated cameras, together with the corresponding mocap data. The mocap data were registered at 100 Hz by a Vicon system consisting of 10 MX-T40 cameras.
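Because the video runs at 25 frames per second and the mocap data at 100 Hz, each video frame corresponds to every fourth mocap sample. The sketch below shows that index mapping. It assumes both streams start at the same instant and use zero-based indices, which should be checked against the actual files; the function names are hypothetical and not part of any dataset tooling.

```python
VIDEO_FPS = 25   # video frame rate stated in the dataset description
MOCAP_HZ = 100   # mocap sampling rate stated in the dataset description


def mocap_index_for_frame(frame_idx, video_fps=VIDEO_FPS, mocap_hz=MOCAP_HZ):
    """Map a zero-based video frame index to the nearest mocap sample index.

    Assumption: both streams start at the same instant.
    """
    if frame_idx < 0:
        raise ValueError("frame_idx must be non-negative")
    return round(frame_idx * mocap_hz / video_fps)


def downsample_mocap(samples, video_fps=VIDEO_FPS, mocap_hz=MOCAP_HZ):
    """Keep one mocap sample per video frame (every 4th sample at 100 Hz / 25 fps)."""
    if mocap_hz % video_fps != 0:
        raise ValueError("mocap rate must be an integer multiple of the video rate")
    step = mocap_hz // video_fps
    return samples[::step]


if __name__ == "__main__":
    print(mocap_index_for_frame(10))
    print(downsample_mocap(list(range(12))))
```

Downsampling by slicing is the simplest option; if the streams turn out to have an offset, the offset would need to be subtracted before applying the mapping.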

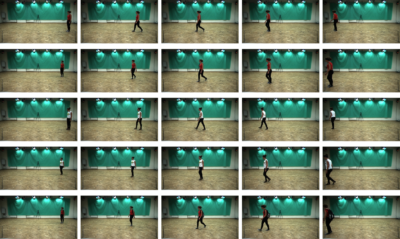

During the recording session, each performer was asked to walk across a scene of size 6.5 m × 4.2 m, both along the line joining cameras C2 and C4 and along the diagonal of the scene. In a single recording session, every performer walked from right to left, then from left to right, and afterwards along the diagonal from the upper-right to the bottom-left corner and from the bottom-left to the upper-right corner of the scene. Some performers were also asked to take part in additional recording sessions, i.e. after changing into different clothes and after putting on a backpack.

First row: a walk from left to right; second row: a walk along the diagonal from upper-right to bottom-left; third and fourth rows: walks in different clothes from left to right and along the diagonal from upper-right to bottom-left, respectively; fifth row: a walk along the diagonal from upper-right to bottom-left with a backpack.

Project participants

Konrad Wojciechowski (Polish-Japanese Academy of Information Technology)

Bogdan Kwolek (AGH University of Science and Technology)

Adam Świtoński (Silesian University of Technology)

Tomasz Krzeszowski (Rzeszow University of Technology)

Henryk Josiński (Silesian University of Technology)

Agnieszka Michalczuk (Polish-Japanese Academy of Information Technology)

Acknowledgements

The recordings were made in 2012-2014 in the Human Motion Lab (Research and Development Center of the Polish-Japanese Academy of Information Technology) in Bytom as part of two projects: 1) "System with a library of modules for advanced analysis and an interactive synthesis of human motion", co-financed by the European Regional Development Fund under the Innovative Economy Operational Programme – Priority Axis 1; 2) OR00002111, financed by the National Centre for Research and Development (NCBiR).

Related publications

- Kwolek, B., Michalczuk, A., Krzeszowski, T., Switonski, A., Josinski, H., Wojciechowski, K.: Calibrated and Synchronized Multi-View Video and Motion Capture Dataset for Evaluation of Gait Recognition, 2019.

- Switonski, A., Krzeszowski, T., Josinski, H., Kwolek, B., Wojciechowski, K.: Gait recognition on the basis of markerless motion tracking and DTW transform. IET Biometrics, vol. 7, iss. 5, pp. 415-422, 2018. doi:10.1049/iet-bmt.2017.0134

- Krzeszowski, T., Switonski, A., Kwolek, B., Josinski, H., Wojciechowski, K.: DTW-Based Gait Recognition from Recovered 3-D Joint Angles and Inter-ankle Distance. Int. Conf. on Computer Vision and Graphics 2014 (ICCVG 2014), LNCS, vol. 8671, pp. 356-363, Springer Berlin / Heidelberg, 2014. [Springer]

- Kwolek, B., Krzeszowski, T., Michalczuk, A., Josinski, H.: 3D Gait Recognition Using Spatio-Temporal Motion Descriptors. The 6th Asian Conference on Intelligent Information and Database Systems (ACIIDS 2014), LNCS, vol. 8398, pp. 595-604, Springer, 2014. [Springer]

- Krzeszowski, T., Kwolek, B., Michalczuk, A., Switonski, A., Josinski, H.: Gait Recognition Based on Marker-less 3D Motion Capture. 10th IEEE Int. Conf. on Advanced Video and Signal Based Surveillance 2013 (AVSS 2013), pp. 232-237, Krakow, 2013. [IEEE Xplore]

- Krzeszowski, T., Kwolek, B., Michalczuk, A., Switonski, A., Josinski, H.: View Independent Human Gait Recognition using Markerless 3D Human Motion Capture. Int. Conf. on Computer Vision and Graphics 2012 (ICCVG 2012), LNCS, vol. 7594, pp. 491-500, Springer Berlin / Heidelberg, 2012. [Springer]

- Rymut, B., Kwolek, B., Krzeszowski, T.: GPU-Accelerated Human Motion Tracking Using Particle Filter Combined with PSO. Advanced Concepts for Intelligent Vision Systems 2013, LNCS, vol. 8192, pp. 426-437, Springer, 2013. [Springer]

- Krzeszowski, T.: Human motion tracking using multiple cameras. (in Polish) PhD Thesis, Silesian University of Technology, Gliwice, Poland, 2013.

- Kwolek, B., Krzeszowski, T., Gagalowicz, A., Wojciechowski, K., Josinski, H.: Real-Time Multi-view Human Motion Tracking Using Particle Swarm Optimization with Resampling. 7th Int. Conf. on Articulated Motion and Deformable Objects 2012, LNCS, vol. 7378, pp. 92-101, Springer Berlin / Heidelberg, 2012. [Springer]

- Krzeszowski, T., Kwolek, B., Wojciechowski, K., Josinski, H.: Markerless Articulated Human Body Tracking for Gait Analysis and Recognition. Machine Graphics & Vision, vol. 20, no. 3, pp. 267-281, 2011.

Dataset access

If you consent to the GPJATK Dataset Release Agreement, you may download the whole dataset from HERE (7 GB). For any questions, comments, or other issues, please contact Tomasz Krzeszowski.